Our evaluation of thirteen large language models (LLMs) revealed significant performance variations in both medical MCQ answering and clinical scenario generation. In MCQ assessment, ChatGPT-o1 achieved the highest accuracy (96.31%), followed by ChatGPT-4.5 (92.62%), while DeepSeek showed the highest consistency (ICC = 0.986). All models significantly outperformed random guessing (p < 0.0001), with large effect sizes (Cohen’s d ranging from 1.540 to 3.799). In clinical scenario generation, Claude-3.5 achieved the highest overall score (91.4% of the maximum possible). Statistical analysis confirmed significant differences between models in both tasks (MCQs: F = 6.243, p < 0.000001, partial η² = 0.066; scenarios: F(12,455) = 8.48, p = 1.39 × 10⁻¹⁴). Table 2 summarizes the performance of the LLMs on the medical MCQ answering and clinical scenario generation tasks.

MCQ performance analysis

Overall accuracy

Model accuracy on medical multiple-choice questions varied substantially, ranging from 72.31% to 96.31% (Fig. 4). ChatGPT-o1 achieved the highest accuracy (96.31 ± 17.85%), followed by ChatGPT-4.5 (92.62 ± 23.94%) and Claude-3.7-E (89.85 ± 24.84%). The lowest-performing models were QwQ-3B (72.31 ± 40.72%), Qwen-2.5-M (72.92 ± 39.76%), and DeepSeek (75.08 ± 42.10%). For reference, the random guessing baseline achieved 22.46 ± 20.90% accuracy. One-tailed t-tests showed that all models significantly outperformed random guessing (all p < 0.0001). Effect sizes were uniformly large, with Cohen’s d ranging from 1.540 (QwQ-3B vs. random) to 3.799 (ChatGPT-o1 vs. random). Repeated measures ANOVA confirmed significant differences between models (F = 6.243, p < 0.000001, partial η² = 0.066), although post-hoc Tukey HSD testing identified no significant pairwise differences between individual LLMs.
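
The reported effect sizes can be reproduced, to within input rounding, from the summary statistics above if Cohen’s d is computed with a pooled standard deviation that assumes equal group sizes, d = (M_model − M_random) / √((SD_model² + SD_random²) / 2). The sketch below makes that assumption explicit; the function name is illustrative, and the study’s actual computation may differ (for example, if it used unequal group sizes). With the reported values it gives d ≈ 3.80 for ChatGPT-o1 and d ≈ 1.54 for QwQ-3B.

```python
import math


def cohens_d_equal_n(mean_a: float, sd_a: float, mean_b: float, sd_b: float) -> float:
    """Cohen's d using a pooled SD that assumes both groups have the same size."""
    pooled_sd = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd


# Summary statistics taken from the text: accuracy in %, as (mean, SD).
RANDOM = (22.46, 20.90)
MODELS = {
    "ChatGPT-o1": (96.31, 17.85),
    "QwQ-3B": (72.31, 40.72),
}

for name, (mean, sd) in MODELS.items():
    d = cohens_d_equal_n(mean, sd, *RANDOM)
    print(f"{name} vs. random: d = {d:.3f}")
```

Because the pooled SD here averages the two variances, it coincides with the usual weighted pooled SD only when both groups contain the same number of observations.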

Model accuracy on medical MCQs with 95% confidence intervals. Bar chart displaying percentage accuracy (mean with 95% confidence interval) for all 13 LLMs compared with the random guessing baseline. ChatGPT-o1 achieved the highest accuracy (96.31%), followed by ChatGPT-4.5 (92.62%), with all models significantly outperforming random guessing (p < 0.0001).

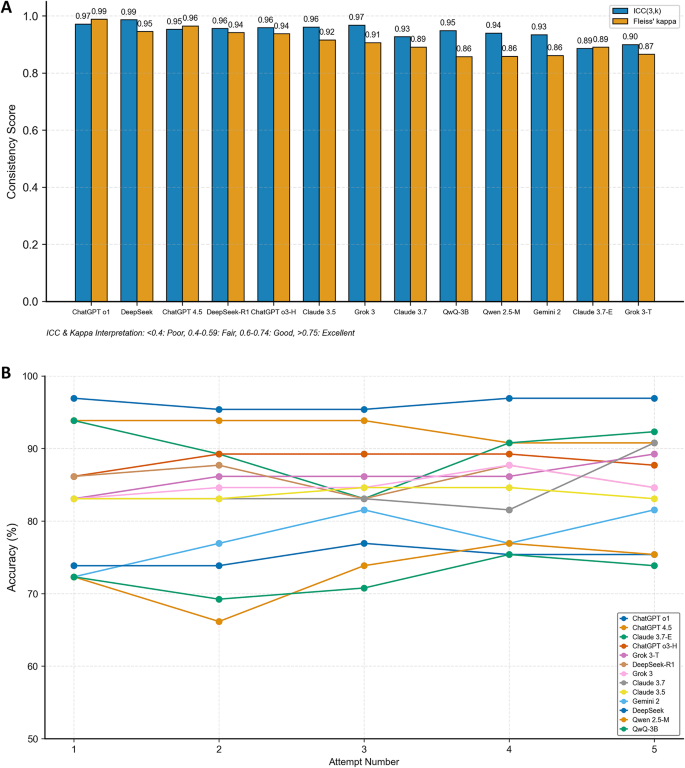

Consistency analysis

Model consistency was assessed using the intraclass correlation coefficient (ICC) and Fleiss’ kappa (Fig. 5A). DeepSeek exhibited the highest consistency (ICC = 0.986 (0.978, 0.992); κ = 0.945), followed by ChatGPT-o1 (ICC = 0.971 (0.954, 0.983); κ = 0.988) and Grok-3 (ICC = 0.968 (0.948, 0.980); κ = 0.906). ChatGPT-4.5 also demonstrated excellent consistency (ICC = 0.953 (0.923, 0.971); κ = 0.965), as did QwQ-3B (ICC = 0.949 (0.917, 0.969); κ = 0.858). All models demonstrated excellent consistency (ICC > 0.85). Performance stability across attempts was measured using the coefficient of variation (CV). ChatGPT-o1 (CV = 0.8%) and Claude-3.5 (CV = 0.9%) showed the most stable performance, while ChatGPT-4.5 (CV = 1.6%) and QwQ-3B (CV = 3.0%) exhibited good to excellent stability. Qwen-2.5-M showed the highest variability of all models (CV = 5.1%). Accuracy was generally consistent across attempts, with ranges across the five attempts varying from 1.5 percentage points (ChatGPT-o1 and Claude-3.5) to 10.7 percentage points (Claude-3.7-E and Qwen-2.5-M); ChatGPT-4.5 showed a range of 3.0 percentage points and QwQ-3B a range of 6.2 percentage points. The pattern of accuracy across all five attempts for each model is illustrated in Fig. 5B, which highlights the marked stability of some models across independent trials.
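
To make the agreement and stability metrics concrete, the sketch below computes Fleiss’ kappa and the CV and range of per-attempt accuracy. It assumes that each question is a "subject", each of the five attempts is a "rater", and each answer option is a category; the text does not specify this mapping, the use of sample rather than population SD for the CV, or the ICC form, so all of these are assumptions (the ICC is not sketched). The function names and example data are hypothetical.

```python
import statistics


def fleiss_kappa(counts: list[list[int]]) -> float:
    """Fleiss' kappa from a subjects x categories matrix of rating counts.

    counts[i][j] = number of attempts (raters) that chose option j for question i.
    Every row must sum to the same number of raters n.
    """
    n_subjects = len(counts)
    n_raters = sum(counts[0])
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("every question must have the same number of attempts")
    # Observed agreement for each question: share of agreeing rater pairs.
    p_i = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1)) for row in counts]
    p_bar = sum(p_i) / n_subjects
    # Chance agreement from the overall proportion of ratings in each category.
    n_categories = len(counts[0])
    p_j = [sum(row[j] for row in counts) / (n_subjects * n_raters) for j in range(n_categories)]
    p_e = sum(p * p for p in p_j)
    return (p_bar - p_e) / (1 - p_e)


def attempt_stability(accuracies: list[float]) -> tuple[float, float]:
    """Return (CV in %, range in percentage points) of per-attempt accuracies."""
    cv = 100 * statistics.stdev(accuracies) / statistics.mean(accuracies)  # sample SD assumed
    return cv, max(accuracies) - min(accuracies)


# Hypothetical data: 4 questions x 5 attempts, answer options A-D.
counts = [[5, 0, 0, 0], [0, 5, 0, 0], [0, 0, 4, 1], [5, 0, 0, 0]]
print(f"Fleiss' kappa = {fleiss_kappa(counts):.3f}")

# Hypothetical accuracies (%) for five attempts of one model.
cv, spread = attempt_stability([90.0, 92.0, 94.0, 96.0, 98.0])
print(f"CV = {cv:.2f}%, range = {spread:.1f} percentage points")
```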

(A) Consistency metrics across LLMs. Bar chart comparing intraclass correlation coefficients (ICC) and Fleiss’ kappa values across all models, with DeepSeek demonstrating the highest consistency (ICC = 0.986, κ = 0.945), followed by ChatGPT-o1 (ICC = 0.971, κ = 0.988). (B) Accuracy stability across attempts for all models. Line graph showing accuracy across five independent attempts for each model, highlighting the stability of some models across trials, with ChatGPT-o1 and Claude-3.5 showing the lowest variation (CV = 0.8% and 0.9%, respectively).

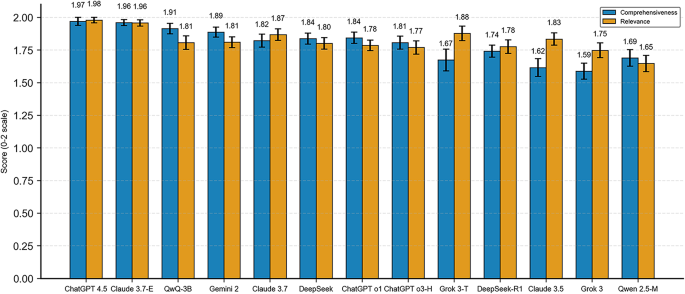

Explanation quality assessment

Explanation quality, assessed by comprehensiveness and relevance, varied across models (Fig. 6). ChatGPT-4.5 provided the highest-quality explanations (comprehensiveness: 1.97 ± 0.12; relevance: 1.98 ± 0.09), followed by Claude-3.7-E (comprehensiveness: 1.96 ± 0.09; relevance: 1.96 ± 0.10) and QwQ-3B (comprehensiveness: 1.91 ± 0.17; relevance: 1.81 ± 0.22). Spearman rank correlation analysis revealed significant positive correlations between explanation quality and answer accuracy for most models. The strongest correlations were observed for Grok-3-T (relevance vs. accuracy: ρ = 0.745, p < 0.0001), Claude-3.7 (comprehensiveness vs. accuracy: ρ = 0.528, p < 0.0001), and ChatGPT-4.5 (comprehensiveness vs. accuracy: ρ = 0.525, p < 0.0001; relevance vs. accuracy: ρ = 0.525, p < 0.0001). QwQ-3B showed moderate correlations (comprehensiveness vs. accuracy: ρ = 0.376, p < 0.0001; relevance vs. accuracy: ρ = 0.288, p < 0.0001). Only ChatGPT-o1’s relevance scores showed no significant correlation with accuracy (ρ = 0.056, p = 0.3126). ANOVA confirmed significant differences between models in both explanation quality metrics (both p < 0.0001).
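
Because explanation scores lie on a coarse 0–2 scale, many questions share the same score, so the Spearman correlation must handle ties by assigning average ranks. A minimal pure-Python sketch, assuming hypothetical per-question pairs of (explanation score, correctness coded 1/0); the function names and data are illustrative, and `scipy.stats.spearmanr` computes the same coefficient (plus a p-value) from average ranks.

```python
def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at sorted position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # average of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman's rho as the Pearson correlation of average ranks (tie-aware)."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical per-question data: relevance score (0-2) and correctness (1 = correct).
relevance = [2, 2, 1, 2, 0, 1, 2, 2]
correct = [1, 1, 0, 1, 0, 1, 1, 0]
print(f"rho = {spearman_rho(relevance, correct):.3f}")
```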

Explanation quality metrics across models with 95% confidence intervals. Dual-metric visualization of comprehensiveness and relevance scores (0–2 scale) with 95% confidence intervals for all LLMs. ChatGPT-4.5 provided the highest-quality explanations (comprehensiveness: 1.97 ± 0.12; relevance: 1.98 ± 0.09).

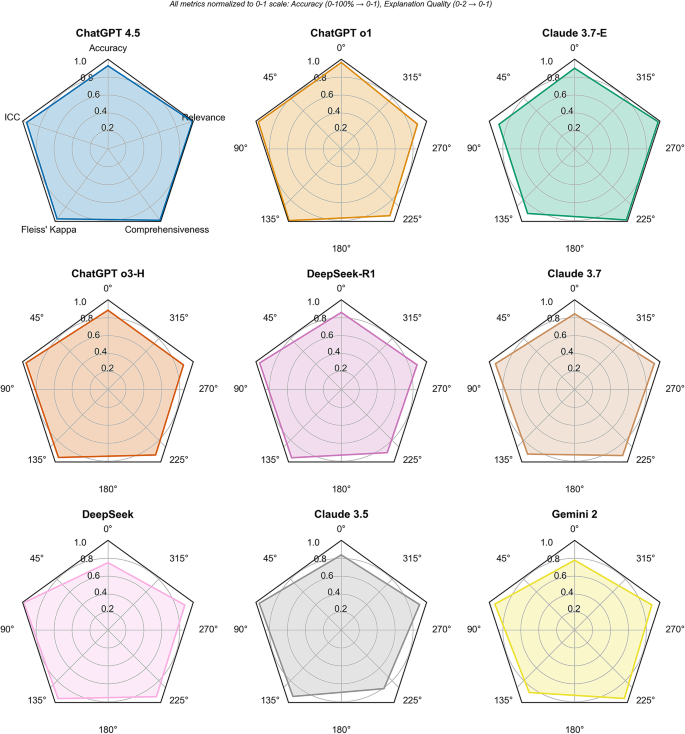

Model-specific findings

Comparative analysis of individual models revealed distinct performance patterns across accuracy, consistency, and explanation quality metrics (Fig. 7). ChatGPT-o1 emerged as the overall top performer, achieving the highest accuracy (96.31%) with excellent consistency (ICC = 0.971, Fleiss’ kappa = 0.988) and remarkable stability across attempts (CV = 0.8%). Its explanations were of high quality (comprehensiveness: 1.84 ± 0.18, relevance: 1.78 ± 0.17), and comprehensiveness correlated significantly with accuracy (ρ = 0.185, p = 0.0008). ChatGPT-4.5, the newer ChatGPT variant, ranked second in accuracy (92.62%) with excellent consistency (ICC = 0.953, Fleiss’ kappa = 0.965) and strong stability (CV = 1.6%), while providing the highest-quality explanations of all models (comprehensiveness: 1.97 ± 0.12, relevance: 1.98 ± 0.09), with both explanation metrics correlating significantly with accuracy (both ρ = 0.525, p < 0.0001). ChatGPT-o3-High ranked fourth in accuracy (88.31%) and maintained excellent consistency (ICC = 0.959, Fleiss’ kappa = 0.938) and strong attempt stability (CV = 1.4%), with both explanation quality metrics correlating significantly with accuracy. Claude-3.7-E ranked third in accuracy (89.85%) and provided high-quality explanations (comprehensiveness: 1.96 ± 0.09, relevance: 1.96 ± 0.10), although its consistency (ICC = 0.886) was lower than that of most other models. Its standard counterpart, Claude-3.7, achieved lower accuracy (84.31%) but higher consistency (ICC = 0.927), while Claude-3.5 showed comparable accuracy (83.69%) with the highest consistency among the Claude variants (ICC = 0.961, CV = 0.9%). The Grok models performed similarly in accuracy (Grok-3-T: 86.15%, Grok-3: 84.92%), with Grok-3 demonstrating higher consistency (ICC = 0.968 vs. 0.900) but Grok-3-T showing a stronger correlation between relevance and accuracy (ρ = 0.745, p < 0.0001).
Among the DeepSeek variants, DeepSeek-R1 substantially outperformed the base model in accuracy (85.85% vs. 75.08%), although the base model achieved the highest consistency of all LLMs (ICC = 0.986, Fleiss’ kappa = 0.945). Both variants had similar relevance scores (DeepSeek-R1: 1.78 ± 0.21; DeepSeek: 1.80 ± 0.18) and comparable comprehensiveness-accuracy correlations. Gemini showed moderate accuracy (77.85%) but provided high-quality explanations (comprehensiveness: 1.89 ± 0.16, relevance: 1.81 ± 0.17) with excellent consistency (ICC = 0.934). QwQ-3B, despite having the lowest accuracy (72.31%), maintained excellent consistency (ICC = 0.949, Fleiss’ kappa = 0.858) with high explanation quality (comprehensiveness: 1.91 ± 0.17, relevance: 1.81 ± 0.22) and significant quality-accuracy correlations (comprehensiveness: ρ = 0.376; relevance: ρ = 0.288; both p < 0.0001). Qwen-2.5-M had the second-lowest accuracy (72.92%) but maintained excellent consistency (ICC = 0.940), with moderate explanation quality (comprehensiveness: 1.69 ± 0.26, relevance: 1.65 ± 0.26) and significant quality-accuracy correlations.
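
The ordinal statements in this subsection (which model ranks third, fourth, lowest, or second-lowest) follow directly from the mean MCQ accuracies reported above. The small sketch below simply sorts those reported values; it uses only numbers from the text, and the dictionary and function names are illustrative.

```python
# Mean MCQ accuracy (%) for each model, as reported in the text.
ACCURACY = {
    "ChatGPT-o1": 96.31, "ChatGPT-4.5": 92.62, "Claude-3.7-E": 89.85,
    "ChatGPT-o3-High": 88.31, "Grok-3-T": 86.15, "DeepSeek-R1": 85.85,
    "Grok-3": 84.92, "Claude-3.7": 84.31, "Claude-3.5": 83.69,
    "Gemini": 77.85, "DeepSeek": 75.08, "Qwen-2.5-M": 72.92, "QwQ-3B": 72.31,
}


def rank_models(scores: dict[str, float]) -> list[tuple[int, str, float]]:
    """Return (rank, model, score) tuples, highest score first."""
    ordered = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [(rank, name, score) for rank, (name, score) in enumerate(ordered, start=1)]


for rank, name, score in rank_models(ACCURACY):
    print(f"{rank:2d}. {name:<16} {score:.2f}%")
```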

Normalized performance profiles across LLMs. Radar chart showing relative performance across multiple metrics (accuracy, consistency, explanation quality) for each model, enabling multi-dimensional comparison of strengths and weaknesses.

Analysis of LLM-generated clinical scenarios

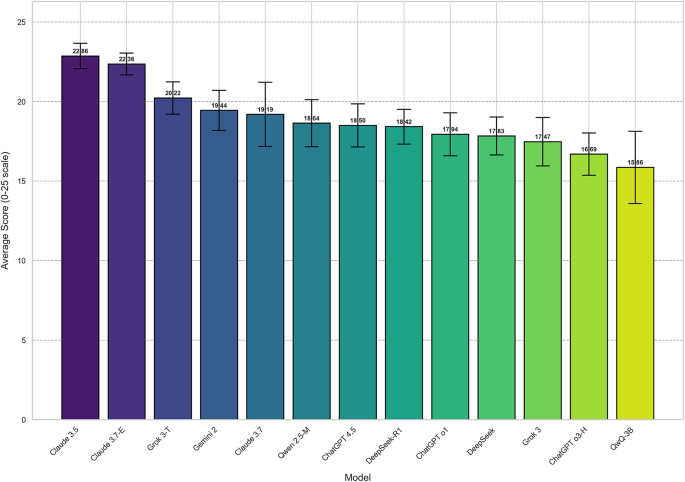

Overall performance and dimensional analysis

Total scores (Fig. 8) show a substantial performance gradient across models: Claude-3.5 and Claude-3.7-E performed markedly better than the other models, and the widths of the confidence intervals indicate notable differences in consistency. Clinical scenario generation performance varied significantly across models (F(12,455) = 8.48, p < 0.0001, η² = 0.1828). Claude-3.5 achieved the highest overall score (91.4% of the maximum possible; mean = 22.86 ± 2.37), followed by Claude-3.7-E (89.4%; mean = 22.36 ± 2.02) and Grok-3-T (80.9%; mean = 20.22 ± 3.01). ChatGPT-4.5 achieved moderate performance (74.0%; mean = 18.50 ± 4.00), ranking seventh overall, while QwQ-3B had the lowest score of all models (63.4%; mean = 15.86 ± 6.71) and the highest variability. The other lowest-performing models were ChatGPT-o3 (66.8%; mean = 16.69 ± 3.93) and Grok-3 (69.9%; mean = 17.47 ± 4.50). Tukey’s HSD post-hoc testing showed that Claude-3.5 significantly outperformed nine of the other twelve models (p < 0.05); its differences from Claude-3.7-E and Grok-3-T were not significant. Model performance varied across the five evaluation dimensions: Quality, Complexity, Relevance, Correctness, and Variety (Fig. 9). Correctness was the strongest dimension for nine models, including ChatGPT-4.5 (4.78 ± 0.30) and QwQ-3B (4.31 ± 0.55), whereas Quality was the weakest dimension for ten models, including QwQ-3B (2.33 ± 0.50); for ChatGPT-4.5, the weakest dimension was Complexity (3.06 ± 0.53). Claude models performed strongly in Quality (Claude-3.5: 4.14 ± 0.30; Claude-3.7-E: 4.25 ± 0.25) and Complexity (Claude-3.5: 4.75 ± 0.25; Claude-3.7-E: 4.53 ± 0.31), whereas ChatGPT models showed notable weaknesses in these areas (Quality: ChatGPT-o1, 2.17 ± 0.47; ChatGPT-o3, 1.97 ± 0.46).
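
The reported effect size and percentages can be checked from the summary statistics alone. The sketch below recovers η² from the F statistic and its degrees of freedom (an identity for one-way ANOVA) and converts mean totals to a percentage of the maximum, assuming a maximum total of 25 points (five dimensions, each on the 0–5 scale described for Fig. 11B). Under these assumptions it returns η² ≈ 0.1828 and 91.4%, 89.4%, and 63.4% for Claude-3.5, Claude-3.7-E, and QwQ-3B, matching the text. The function and constant names are illustrative.

```python
def eta_squared_from_f(f_stat: float, df_between: int, df_within: int) -> float:
    """One-way ANOVA eta squared recovered from F and its degrees of freedom.

    F = (SS_b / df_b) / (SS_w / df_w) implies SS_b / SS_w = F * df_b / df_w, so
    eta^2 = SS_b / (SS_b + SS_w) = F * df_b / (F * df_b + df_w).
    """
    return f_stat * df_between / (f_stat * df_between + df_within)


MAX_TOTAL = 5 * 5  # assumed: five rubric dimensions, each scored 0-5


def percent_of_max(mean_total: float, max_total: float = MAX_TOTAL) -> float:
    """Express a mean total score as a percentage of the maximum possible."""
    return 100 * mean_total / max_total


print(f"eta^2 = {eta_squared_from_f(8.48, 12, 455):.4f}")
for model, mean in [("Claude-3.5", 22.86), ("Claude-3.7-E", 22.36), ("QwQ-3B", 15.86)]:
    print(f"{model}: {percent_of_max(mean):.1f}% of maximum")
```
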
Kruskal-Wallis tests identified significant differences between models across all dimensions (p < 0.0001 for Quality, Complexity, Relevance, and Variety; p = 0.0078 for Correctness). Effect sizes were large for Quality (η² = 0.2346), Complexity (η² = 0.2549), Relevance (η² = 0.1583), and Variety (η² = 0.1456), with Correctness showing a medium effect (η² = 0.0633). Correlation analysis revealed strong associations between Quality and Complexity (r = 0.755) and between Complexity and Variety (r = 0.560).
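
The text does not state how η² was derived from the Kruskal-Wallis tests. One common estimator is η²_H = (H − k + 1) / (n − k), where k is the number of groups and n the number of observations, usually interpreted against conventional benchmarks of 0.01 (small), 0.06 (medium), and 0.14 (large). The sketch below encodes both as assumptions; applied to the reported values, these thresholds agree with the "large" and "medium" labels in the text. The function names are illustrative.

```python
def eta_squared_h(h_stat: float, n_groups: int, n_obs: int) -> float:
    """Eta squared for a Kruskal-Wallis H statistic: (H - k + 1) / (n - k)."""
    return (h_stat - n_groups + 1) / (n_obs - n_groups)


def effect_size_label(eta_sq: float) -> str:
    """Conventional benchmarks (assumed): 0.01 small, 0.06 medium, 0.14 large."""
    if eta_sq >= 0.14:
        return "large"
    if eta_sq >= 0.06:
        return "medium"
    if eta_sq >= 0.01:
        return "small"
    return "negligible"


# Effect sizes as reported in the text.
REPORTED = {"Quality": 0.2346, "Complexity": 0.2549, "Relevance": 0.1583,
            "Variety": 0.1456, "Correctness": 0.0633}
for dimension, eta in REPORTED.items():
    print(f"{dimension}: eta^2 = {eta:.4f} ({effect_size_label(eta)})")
```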

Overall clinical scenario generation performance across LLMs with 95% confidence intervals. Bar chart showing overall performance scores (with 95% confidence intervals) for clinical scenario generation tasks. Claude-3.5 achieved the highest overall score (91.4% of the maximum possible; mean = 22.86 ± 2.37), followed by Claude-3.7-E (89.4%).

Dimensional performance heatmap for clinical scenario generation. Color-coded heatmap visualizing model performance across five evaluation dimensions (Quality, Complexity, Relevance, Correctness, Variety), revealing Correctness as the strongest dimension for most models and Quality as the weakest.

Contextual performance analysis

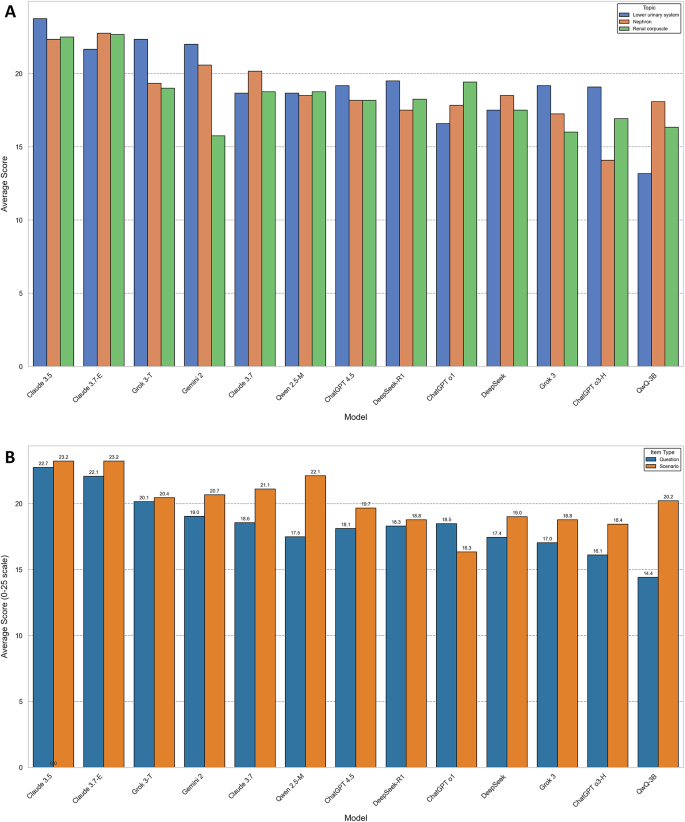

Performance across the three histology topics (renal corpuscle, nephron, and lower urinary system) revealed topic-specific strengths (Fig. 10A). Claude-3.5 performed consistently well across all topics (lower urinary: 23.75 ± 1.86; nephron: 22.33 ± 3.26; renal corpuscle: 22.50 ± 1.57), whereas Gemini-2 varied markedly, performing strongly on lower urinary topics (22.00 ± 1.76) but considerably worse on renal corpuscle content (15.75 ± 3.65). ChatGPT-4.5 performed moderately and fairly consistently across topics (lower urinary: 19.17 ± 4.71; nephron: 18.17 ± 3.51; renal corpuscle: 18.17 ± 3.95), whereas QwQ-3B showed substantial topic-dependent variability (lower urinary: 13.17 ± 9.70; nephron: 18.08 ± 4.91; renal corpuscle: 16.33 ± 3.39), with particularly poor performance on lower urinary system topics. ANOVA identified a significant model × topic interaction (F(24,429) = 1.98, p = 0.0043), indicating that relative model performance depended on the topic. A comparison of scenario creation and question formulation (Fig. 10B) showed that eleven of thirteen models scored higher on scenario creation, with the difference reaching statistical significance for Qwen-2.5-M (p = 0.0043) and QwQ-3B (p = 0.0221). ChatGPT-4.5 followed this pattern (scenario: 19.67 ± 3.81; question: 18.11 ± 4.05), although the difference was not statistically significant (p = 0.3189). QwQ-3B showed the largest disparity between scenario creation and question formulation (scenario: 20.22 ± 5.21; question: 14.41 ± 6.59). These findings suggest that most LLMs generate coherent clinical scenarios more readily than appropriate assessment questions, a pattern that is particularly pronounced in some models.
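
If each topic contributes the same number of items (an assumption; the text does not give per-topic counts), a model's overall mean is simply the unweighted mean of its topic means, and the max-min spread gives a quick measure of topic dependence. With the reported topic means, this reproduces the overall means given earlier (22.86 for Claude-3.5, 18.50 for ChatGPT-4.5, 15.86 for QwQ-3B). The dictionary name is illustrative.

```python
from statistics import mean

# Mean total scores by histology topic, as reported in the text.
TOPIC_MEANS = {
    "Claude-3.5": {"lower urinary": 23.75, "nephron": 22.33, "renal corpuscle": 22.50},
    "ChatGPT-4.5": {"lower urinary": 19.17, "nephron": 18.17, "renal corpuscle": 18.17},
    "QwQ-3B": {"lower urinary": 13.17, "nephron": 18.08, "renal corpuscle": 16.33},
}

for model, by_topic in TOPIC_MEANS.items():
    scores = list(by_topic.values())
    overall = mean(scores)  # equals the item-level mean only if topics have equal item counts
    spread = max(scores) - min(scores)
    print(f"{model}: overall = {overall:.2f}, topic spread = {spread:.2f}")
```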

(A) Contextual performance analysis: Performance by histology topic. Bar chart comparing model performance across three histological topics (renal corpuscle, nephron, lower urinary system), showing significant topic-dependent variability, particularly in models like Gemini-2 and QwQ-3B. (B) Contextual performance analysis: Performance by item type. Bar chart comparing performance in scenario creation versus question formulation tasks, showing 11 of 13 models achieved higher scores on scenario creation than question formulation, with QwQ-3B showing the largest disparity.

Model family comparison

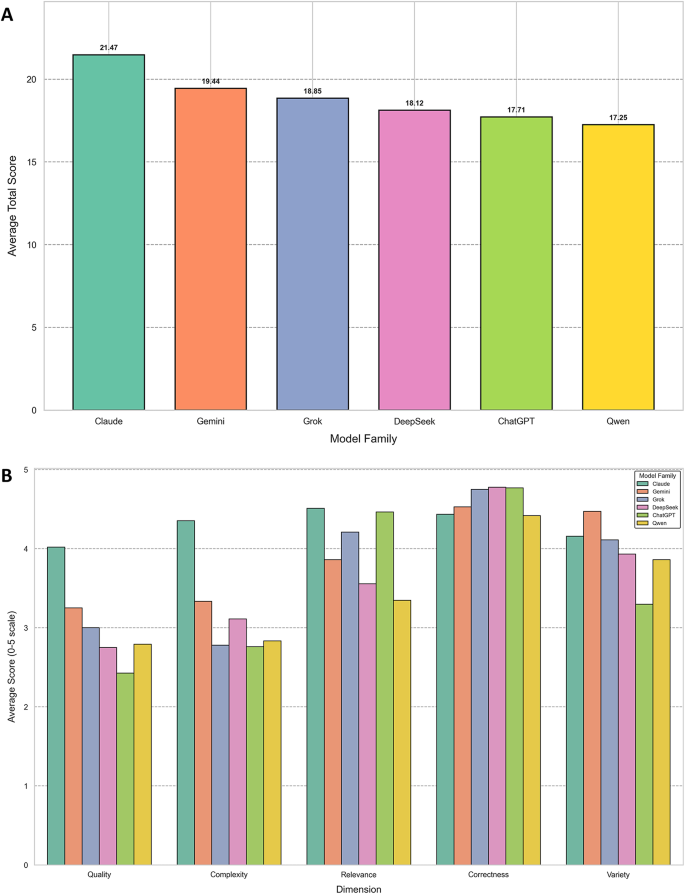

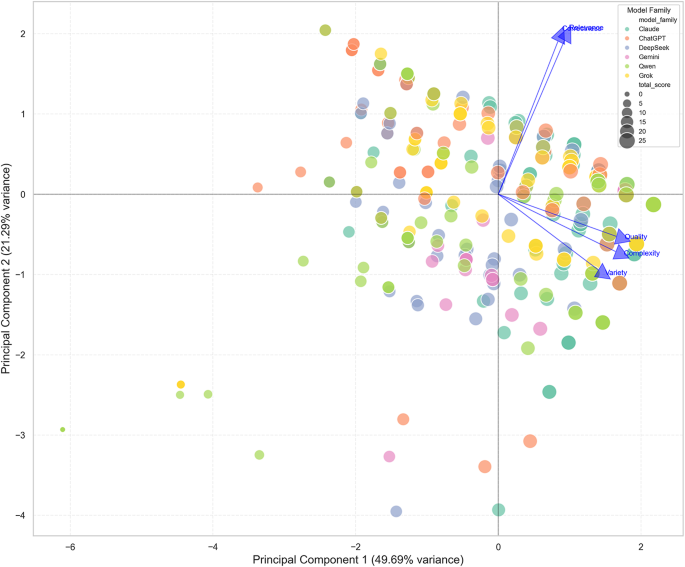

When grouped by provider family, Claude models (Claude-3.5, Claude-3.7, Claude-3.7-E) demonstrated superior overall performance (mean = 21.47 ± 4.18), followed by the Gemini (19.44 ± 3.73), Grok (18.85 ± 4.04), DeepSeek (18.13 ± 3.36), ChatGPT (17.71 ± 4.01), and Qwen (17.25 ± 5.80) families (Fig. 11A). Including ChatGPT-4.5 and QwQ-3B left the ranking of model families unchanged, although it narrowed the performance gap between the ChatGPT and Qwen families. ANOVA confirmed significant differences between model families (F(5,462) = 12.25, p < 0.0001), and Tukey’s HSD post-hoc analysis showed that the Claude family significantly outperformed the ChatGPT (p < 0.0001), DeepSeek (p < 0.0001), Grok (p = 0.0009), and Qwen (p < 0.0001) families. Dimensional analysis by family (Fig. 11B) showed that ChatGPT models excelled in Correctness (4.77) and Relevance (4.46) despite low Quality scores (2.43), whereas Claude models performed more evenly across all dimensions. The Qwen family, which includes QwQ-3B, had the lowest Relevance score (3.35), with moderate scores for Correctness (4.42) and Variety (3.86). Principal component analysis (PCA) showed that the first two principal components explained 70.98% of the variance in model performance, with PC1 (49.69% of the variance) loading primarily on Quality (0.553) and Complexity (0.549). This suggests that Quality and Complexity are the most discriminative dimensions for evaluating clinical scenario generation. The PCA plot (Fig. 12) shows how models cluster in performance space, with ChatGPT-4.5 positioned near the center of the distribution and QwQ-3B clustered with the lower-performing models.
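
A PCA of this kind can be sketched in a few lines of NumPy. The sketch assumes a score matrix with one row per rated item (or per model) and one column per rubric dimension, standardizes each column so the analysis runs on the correlation matrix, and returns the explained-variance ratios and PC1 loadings. The text does not state whether the data were standardized, so that step is an assumption, and the function name and demo data are hypothetical; the demo output is not expected to match the reported values.

```python
import numpy as np

DIMENSIONS = ["Quality", "Complexity", "Relevance", "Correctness", "Variety"]


def pca_summary(scores: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Explained-variance ratios and PC1 loadings for an (n_items, n_dimensions) array."""
    # Standardize each dimension so PCA operates on the correlation matrix.
    z = (scores - scores.mean(axis=0)) / scores.std(axis=0, ddof=1)
    corr = np.cov(z, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(corr)  # eigh returns eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    explained = eigvals / eigvals.sum()
    pc1 = eigvecs[:, 0]
    if pc1.sum() < 0:  # eigenvector signs are arbitrary; orient PC1 to mostly positive loadings
        pc1 = -pc1
    return explained, pc1


# Hypothetical demo data: 200 ratings on five 0-5 dimensions driven by a shared factor.
rng = np.random.default_rng(seed=0)
shared = rng.normal(size=(200, 1))
demo = np.clip(3 + shared + 0.8 * rng.normal(size=(200, 5)), 0, 5)
explained, pc1 = pca_summary(demo)
print(f"PC1 + PC2 explain {100 * explained[:2].sum():.2f}% of variance")
for name, loading in zip(DIMENSIONS, pc1):
    print(f"{name:<12} PC1 loading = {loading:.3f}")
```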

(A) Model family analysis: Overall performance by model family. Bar chart showing aggregated performance of models grouped by provider family, with Claude models demonstrating superior overall performance (mean = 21.47 ± 4.18) compared with other families. (B) Model family analysis: Dimensional performance by model family. Comparison of AI model families across five evaluation dimensions (Quality, Complexity, Relevance, Correctness, and Variety) on a 0–5 scale, showing ChatGPT models excelling in Correctness (4.77) despite low Quality scores (2.43), while Claude models demonstrated more balanced performance.

Principal Component Analysis of LLM performance in clinical scenario generation. Two-dimensional PCA plot visualizing clustering of models based on performance dimensions, with the first principal component (49.69% of variance) primarily associated with Quality and Complexity dimensions.